How do I create AI-optimized pages for my website?

Also asked as:

- How do I make pages that AI platforms will read and cite?

- Can I build webpages designed for AI agents?

Scrunch recommends two ways to create AI-optimized webpages: update existing content via Optimizer, or deliver AI-optimized versions of page content via the Agent Experience Platform (AXP).

Additional context: AI-optimized pages are clean, server-rendered HTML with structured summaries, clarified definitions, and no JavaScript dependency—designed for how AI agents actually read and parse content.

Example

Imagine a Scrunch user wants to make their product pages more visible in AI search. They have two paths, depending on their goals and resources:

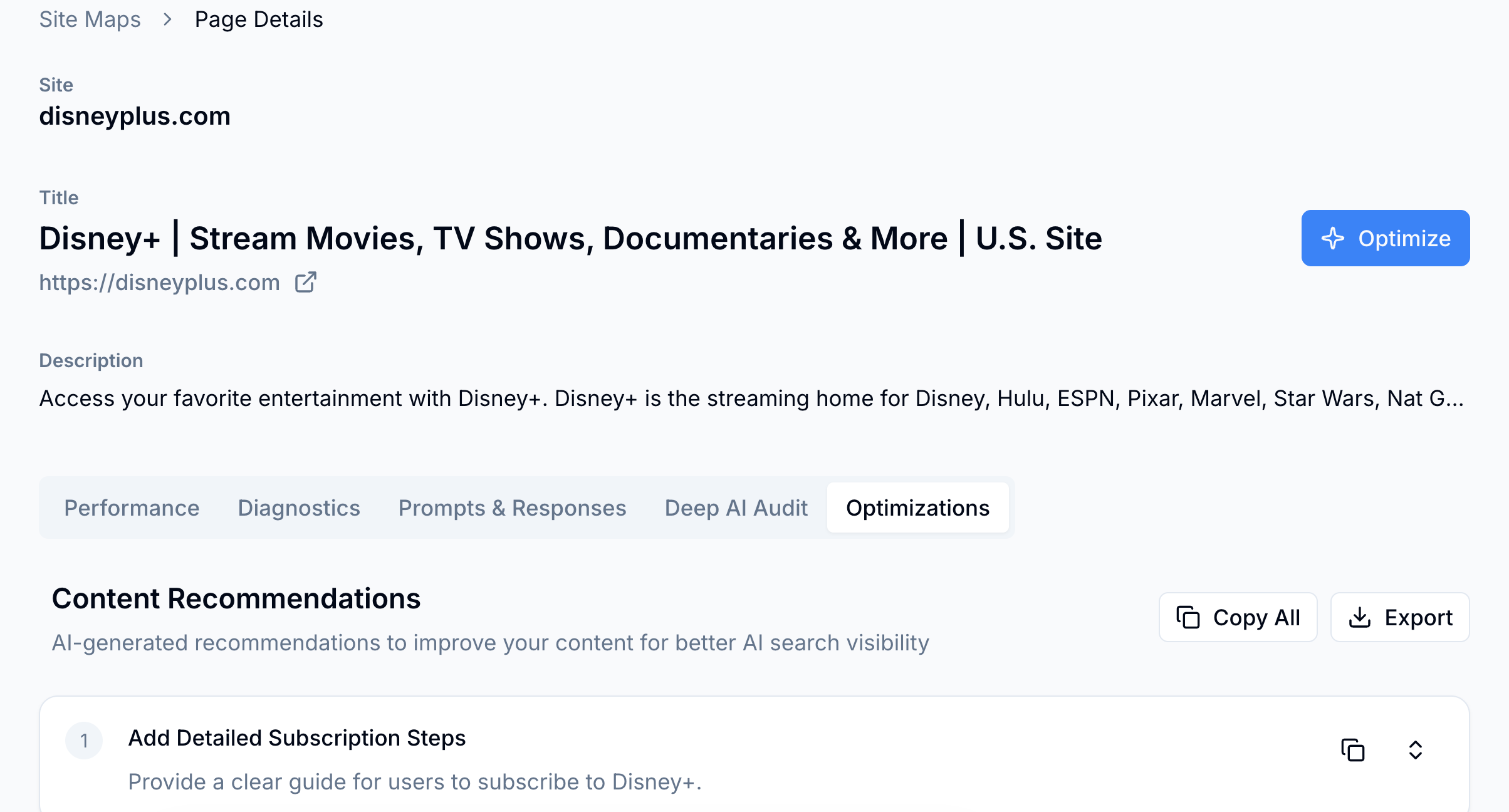

1. Optimize existing content: Use Optimizer recommendations to update content manually, or let Optimizer restructure pages automatically. Both approaches improve metadata, structure, and content density for AI consumption.

2. Deliver an AI-optimized version directly to LLMs: Enable the Agent Experience Platform. AXP detects AI agent traffic at the CDN level, then serves a clean HTML version while humans see the original site.

Optimizer improves underlying content quality. AXP makes content AI-friendly while preserving the human experience.
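
To make the second path more concrete, here is a minimal sketch of routing by user agent. It is not Scrunch's implementation and does not reflect how AXP detects agents internally. The token list, the function names, and the variant labels are all assumptions chosen for illustration.

```python
# Hypothetical list of substrings that identify AI agents in a User-Agent
# header. A real deployment would maintain its own, longer list.
AI_AGENT_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def is_ai_agent(user_agent: str) -> bool:
    """Return True if the User-Agent contains any known AI agent token."""
    ua = user_agent.lower()
    return any(token.lower() in ua for token in AI_AGENT_TOKENS)


def choose_variant(user_agent: str) -> str:
    """Pick which version of a page to serve (labels are illustrative)."""
    return "ai_optimized_html" if is_ai_agent(user_agent) else "original_site"


bot_ua = "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"
human_ua = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0"

print(choose_variant(bot_ua))
print(choose_variant(human_ua))
```

Matching on the User-Agent header alone is the simplest possible check. It is shown here only to illustrate the idea of serving a different version to agents while humans see the original site.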

Follow-up question: Do I need to rebuild my website to create AI-optimized pages?

No, AXP works at the CDN level to serve an AI-optimized version automatically—no code changes, redesigns, or framework migrations required. Existing pages stay intact.

Related FAQs

What benchmarks or baselines are useful when evaluating AI search performance?

Scrunch recommends tracking brand presence, citations, referral traffic, AI agent traffic, and share of voice versus competitors as key performance indicators.

How can I see if my visibility in AI search is improving or declining over time?

Scrunch recommends monitoring AI search trend data, such as brand mentions and citations, consistently over 2-3 week periods to distinguish real trends from one-off changes.
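
One way to separate a trend from a one-off change is to compare averages across consecutive multi-week windows rather than individual data points. The sketch below assumes weekly citation counts exported as a simple list. The numbers and the function name are hypothetical, and this is not a description of how Scrunch computes trends.

```python
def window_averages(values, window):
    """Average consecutive, non-overlapping windows; drop any partial tail."""
    return [
        sum(values[i:i + window]) / window
        for i in range(0, len(values) - window + 1, window)
    ]


# Hypothetical weekly citation counts for six weeks.
weekly_citations = [10, 12, 11, 14, 15, 16]

# Compare two 3-week periods instead of week-to-week changes.
averages = window_averages(weekly_citations, 3)
print(averages)  # [11.0, 15.0]
```

Here a rise from an average of 11 to 15 across two full periods is a stronger signal than any single week's movement.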

How many prompts should I track for AI search?

Scrunch recommends estimating how many prompts to track for AI search using the following approach: X (number of topic clusters) × Y (12-15 questions related to each topic cluster) = Z (number of AI search prompts to track). The primary goal is to get a representative sampling of data across all customer journey stages via a mix of branded and non-branded prompts.
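
As a worked example of this estimate, the sketch below multiplies a cluster count by the low and high ends of the 12-15 range. The cluster count of 8 is a hypothetical input, and the function name is illustrative.

```python
def prompts_to_track(topic_clusters: int, questions_per_cluster: int) -> int:
    """Z = X (topic clusters) x Y (questions per topic cluster)."""
    return topic_clusters * questions_per_cluster


clusters = 8  # hypothetical number of topic clusters

low = prompts_to_track(clusters, 12)
high = prompts_to_track(clusters, 15)

print(f"Track roughly {low}-{high} prompts")  # Track roughly 96-120 prompts
```

With 8 topic clusters, the estimate comes out to between 96 and 120 prompts.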