TLDR:

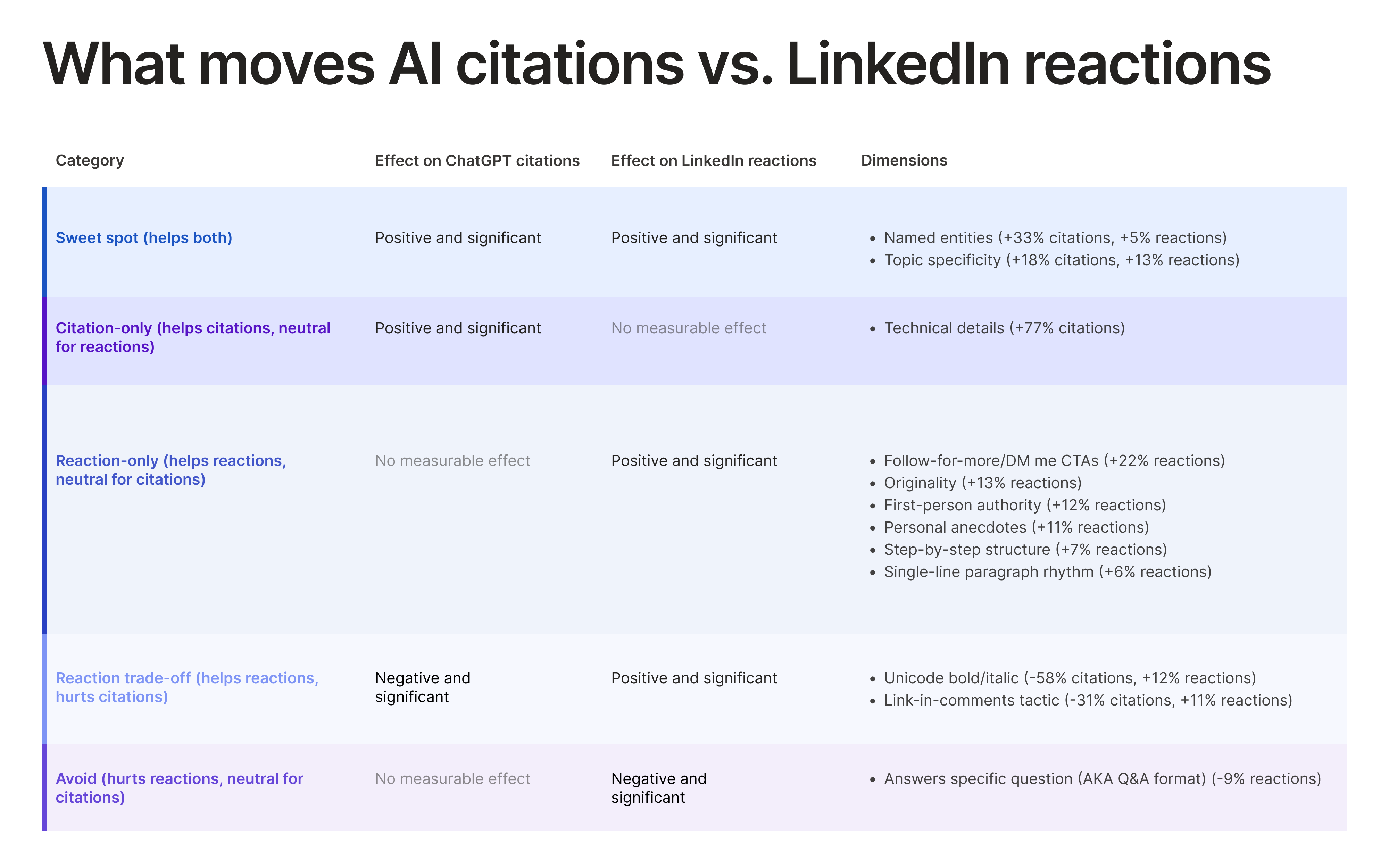

- ChatGPT cites LinkedIn posts based on content, not social proof—reaction counts have near-zero effect on whether a post gets cited.

- Technical details, named entities (aka companies, people, products, etc.), and topic specificity make LinkedIn posts more likely to be cited by ChatGPT.

- Unicode formatting and link-in-comments posts both hurt citation chances while lifting LinkedIn reactions.

There’s been a lot of talk recently about LinkedIn gaining traction in AI search citations. Naturally, a lot of that talk happens on LinkedIn.

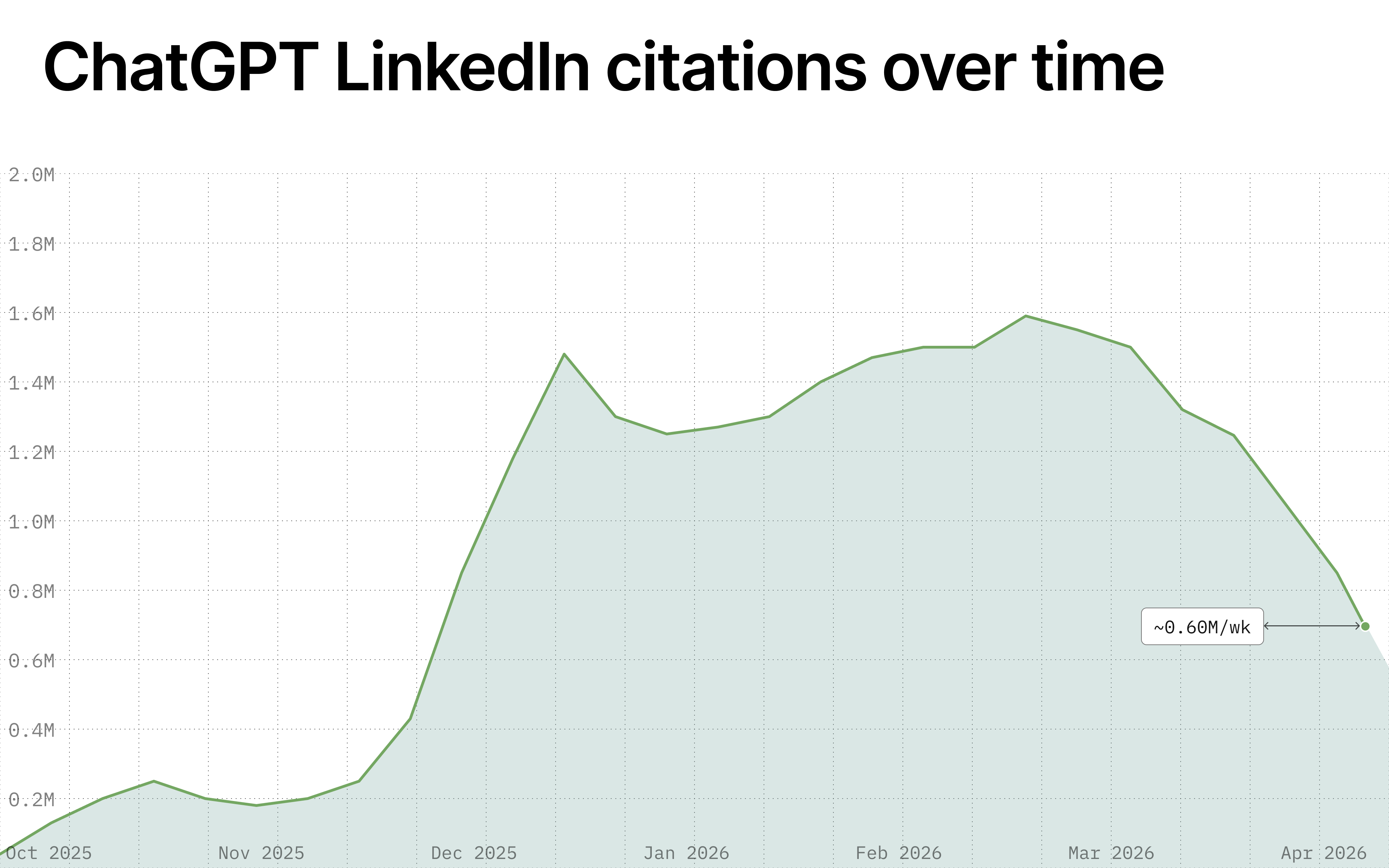

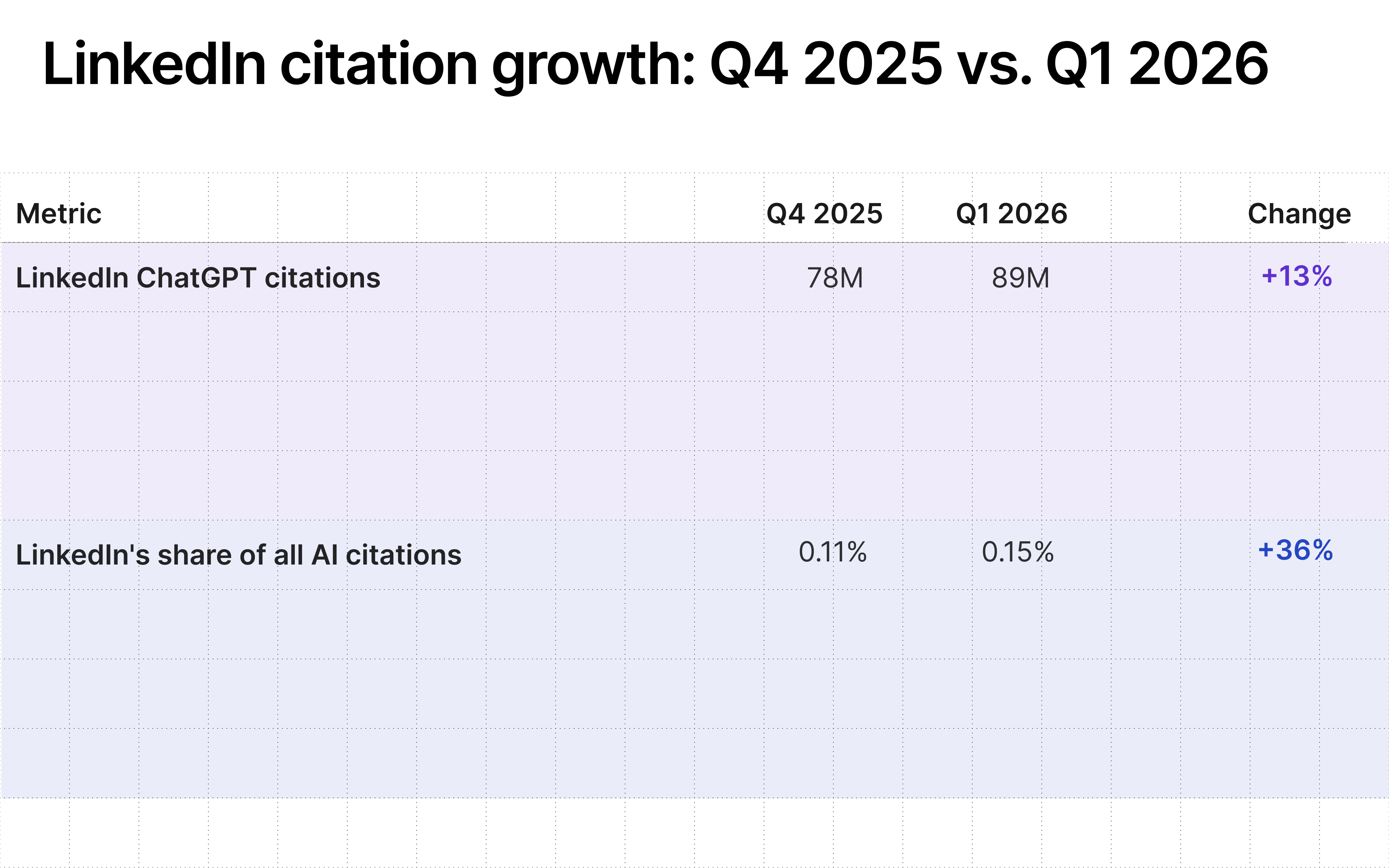

Our research shows that LinkedIn’s piece of the citation pie does appear to be growing.

AI platforms cite LinkedIn content roughly 8 million times per week in the US for industry and commercial prompts (aka the kinds of questions people ask when they’re researching products and services or making purchase decisions). And the volume was increasing 13% month over month as of Q1 2026.

If your audience is searching via ChatGPT, Perplexity, or other AI assistants, more and more of the answers they see are shaped by LinkedIn content.

But the devil, as always, is in the details. Simply posting on LinkedIn doesn’t automatically mean that your brand will show up more in AI answers.

We set out to find out what actually moves the needle by analyzing which LinkedIn posts ChatGPT retrieved and which of those it went on to cite. You can dig into our findings below.

The main takeaway for brands: The playbooks for winning LinkedIn engagement and AI citations overlap more than you might expect, but they also diverge in key areas.

Why is our analysis focused on ChatGPT?

ChatGPT is the most widely used AI chatbot in the world. It's also the platform where we have the most statistically meaningful dataset to pull from in terms of both LinkedIn post consideration and citation.

We touch on data from other platforms later in our analysis, but we wanted to anchor on the strongest data we have about LinkedIn posts that were retrieved as citation candidates.

ChatGPT is searching for answers, not scrolling your feed

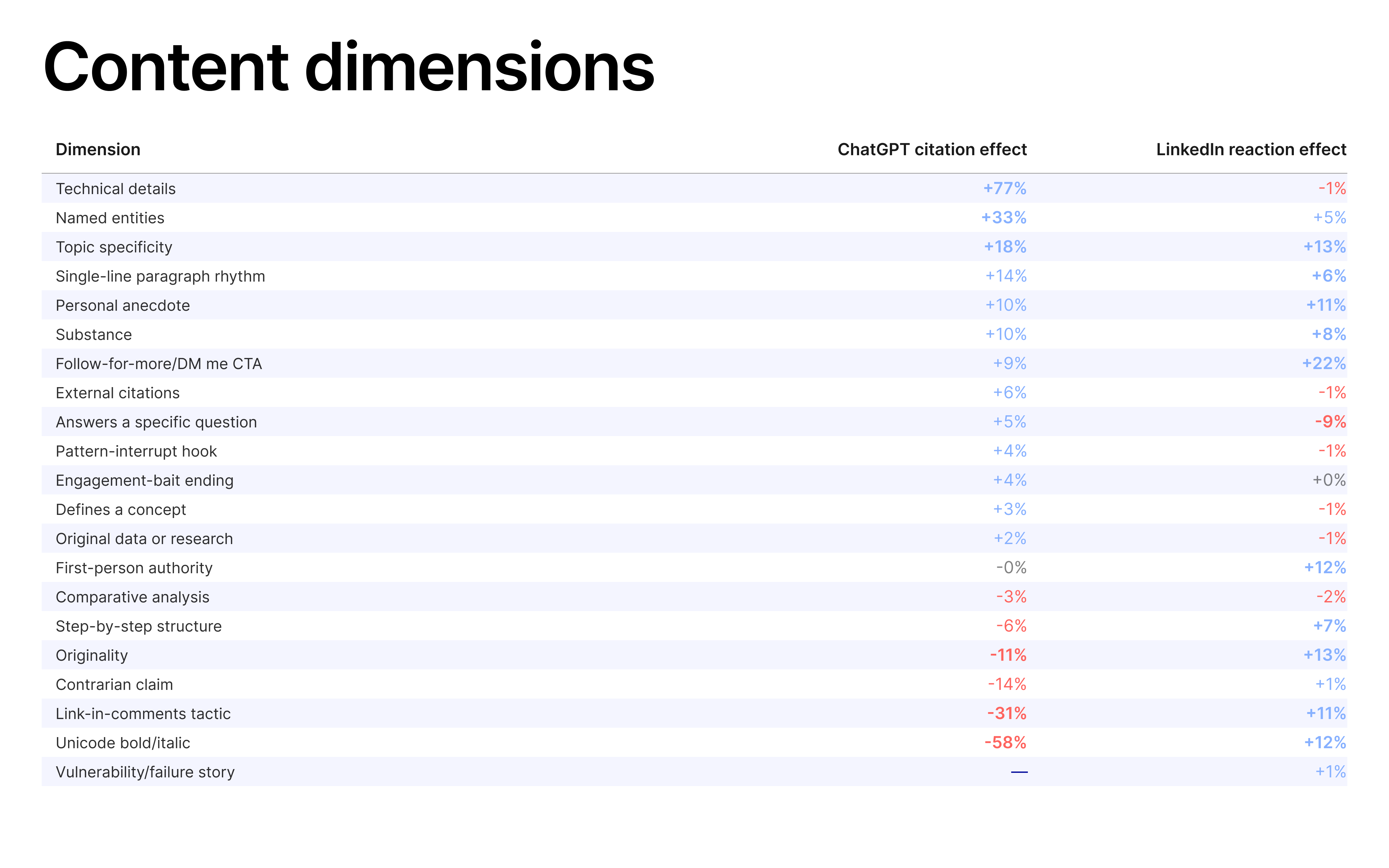

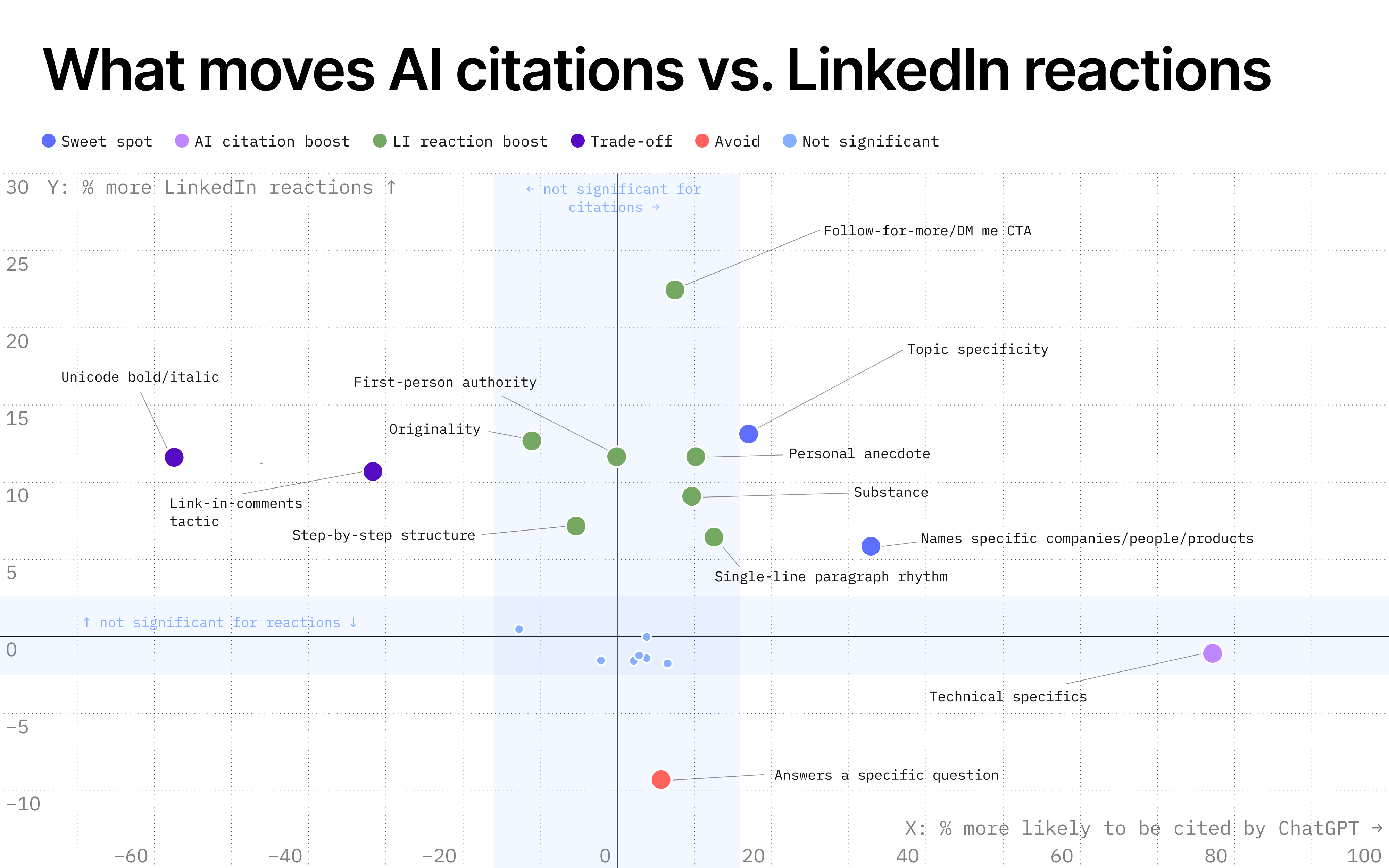

We tested 21 LinkedIn content dimensions—think things like contrarian takes, original research, single-line paragraph breaks, and so on (you can see the full list of content dimensions at the bottom of this post).

We then categorized these dimensions based on whether they help, hurt, or leave unchanged ChatGPT citations and/or LinkedIn reactions.

The scatter plot above shows every dimension. Ones that didn't really move either outcome after statistical correction are shown as small, light-blue dots (these include things like pattern-interrupt hooks, engagement-bait endings, etc.).

These aren’t negative; they just don't have a measurable effect on either citations or reactions in our causal model once we control for everything else.

In terms of what does have an impact, three features clearly stand out when it comes to citations: technical details, named entities (think naming specific companies, people, products, etc.), and topic specificity.

All three work for AI because they make your post matchable to real questions.

Bonus: Two out of the three also boost LinkedIn reactions.

Engagement doesn’t drive citation

You may be wondering: Does ChatGPT just cite whatever’s popular on LinkedIn?

We tested this directly. After controlling for content dimensions, post age, and prompt difficulty, a post's reaction count has near-zero predictive power over whether ChatGPT cites it.

If you hold the content constant, a post with 100 reactions is cited at essentially the same rate as one with 10,000. ChatGPT selects on content, not social proof.

Technical details make a post 77% more likely to be cited (and have no effect on LinkedIn reactions)

This is the dominant finding for AI citations specifically—and it's not close. Posts with specific technical details are 77% more likely to be cited by ChatGPT.

When it comes to reactions, technical details do essentially nothing. The reaction point estimate for these posts is slightly below zero, but not statistically distinguishable from zero.

In other words: Adding technical details to your posts won't rack up likes on LinkedIn, but it won't cost you reactions either.

Keep in mind: This is not about writing literal code in your LinkedIn feed. It's about displaying the depth and specificity of what you know.

Example:

| Generic post | Technical post |
|---|---|
| "AI is transforming how we think about data pipelines." | "After running 200+ Airflow DAGs at scale, the three failure modes we see most are executor memory pressure during backfills, cross-DAG dependency deadlocks, and sensor timeout cascades on S3 event triggers." |

Named entities make a post 33% more likely to be cited (and 5% more likely to be reacted to)

Naming specific companies, people, products, frameworks, and tools helps in terms of both AI citations and LinkedIn reactions.

You don’t have to @-tag a company or person for this to work for AI; ChatGPT reads the text of the post, not the LinkedIn mention graph. Typing names in plain text counts for citations, too.

Tagging is fine, but it's the name appearing in the post that makes the content findable for AI.

Example:

| Vague post | Named post |
|---|---|
| "There are several great project management tools on the market." | "Notion vs. Linear vs. Asana: how their automation engines actually differ for teams over 50." |

Topic-specific posts are 18% more likely to be cited (and 13% more likely to be reacted to)

Writing about a specific niche rather than a broad trend is the strongest sweet-spot content dimension (that is, a dimension that helps with both citations and reactions).

We scored specificity on a 1–5 scale (1=broad, 5=very niche)—very niche posts won at both citations and reactions.

It makes sense: Topic specificity helps ChatGPT find content for the right queries and also helps LinkedIn users find content that's relevant to them.

Example:

| Broad post | Specific post |
|---|---|
| "The future of fintech is exciting." | "Embedded lending is changing unit economics for vertical SaaS platforms serving auto dealerships." |

While there are certainly practices that can increase LinkedIn reactions while hurting AI citations (more on that below), we found that the inverse isn’t true.

The nearest candidate is technical details, as mentioned above, but it has no measurable impact on reactions, and no other dimension comes close.

Some LinkedIn tricks tank ChatGPT citations

Not every LinkedIn best practice translates to AI search—and some actively work against you.

The two tactics below boost LinkedIn reactions while hurting your citation chances. Whether that trade-off is worth it depends on what you're optimizing for.

Posts with Unicode formatting are 58% less likely to be cited (but 12% more likely to be reacted to)

LinkedIn's post editor doesn't support native bold or italic type, so authors have turned to a workaround: 𝗯𝗼𝗹𝗱 and 𝘪𝘵𝘢𝘭𝘪𝘤 text built from unicode mathematical-alpha characters (U+1D400–U+1D7FF). It's visually indistinguishable from real formatting.
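To check whether a draft contains these characters, a simple code point range test goes a long way. The sketch below is a minimal illustration, not the detection used in this analysis; `count_math_alphanumerics` is a hypothetical helper name, and it relies only on the range quoted above.

```python
def count_math_alphanumerics(text: str) -> int:
    """Count characters in the Mathematical Alphanumeric Symbols block."""
    # Range taken from the paragraph above: U+1D400 through U+1D7FF.
    return sum(1 for ch in text if 0x1D400 <= ord(ch) <= 0x1D7FF)


# Sans-serif bold "bold" (four styled letters) followed by plain text.
styled = "\U0001D5EF\U0001D5FC\U0001D5F9\U0001D5F1 claims"
plain = "bold claims"

print(count_math_alphanumerics(styled))  # 4
print(count_math_alphanumerics(plain))   # 0
```

A few styled letters (for example the italic h, U+210E) sit outside this block, so a range check like this can undercount slightly.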

On LinkedIn, it works: Unicode-formatted posts earn about 12% more reactions.

But ChatGPT is 58% less likely to cite them. The effect is large enough that it can't plausibly be explained by unmeasured confounders—any hidden factor would need to be ~4.6x stronger than anything else we measured to explain it away.

Note: This appears to be a ChatGPT-only issue. OpenAI’s chatbot doesn’t apply Normalization Form Compatibility Decomposition (NFKD), a standard Unicode procedure that decomposes mathematical-alpha characters into their plain ASCII equivalents.

We ran the same detection across other major AI platforms over the same 90-day window. Results seemed to isolate the issue to ChatGPT.

For example:

- Perplexity: No statistically significant penalty despite thousands of unicode results in our sample

- Google AI Mode/AI Overviews: Zero unicode in snippets across 390K observations (direct Gemini embedding tests suggest unicode-bold and ASCII versions are treated as near-equivalent)

The fix is one line of code (unicodedata.normalize('NFKD', text)) at indexing. Until OpenAI ships it, unicode-formatted content is effectively invisible to ChatGPT's retriever bot.
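As a rough illustration of what that normalization does, the sketch below applies Python's standard-library `unicodedata.normalize` to a styled headline. It shows the general technique only; how any AI platform actually indexes text is not something this example can confirm, and the helper name `to_plain_text` is hypothetical.

```python
import unicodedata


def to_plain_text(text: str) -> str:
    """Apply NFKD so styled math-alpha letters decompose to plain letters."""
    return unicodedata.normalize("NFKD", text)


# "Data" written in sans-serif bold math letters, then ordinary text.
headline = "\U0001D5D7\U0001D5EE\U0001D601\U0001D5EE Leader at Acme Corp."

print(to_plain_text(headline))  # Data Leader at Acme Corp.
```

NFKD also splits accented letters into a base letter plus a combining mark, so pipelines that want composed output often use NFKC instead. Both forms map the math-alpha letters to plain ones.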

Keep in mind that this doesn’t just affect posts. The unicode penalty applies to any page ChatGPT is retrieving. That means your LinkedIn profile takes the same hit.

LinkedIn names and headlines commonly use unicode formatting—a bolded “Data Leader at Acme Corp.” title looks polished in the feed, but to ChatGPT's search, it's a string no one will ever query.

The same logic hits Instagram bios, Facebook pages, press releases copy-pasted from formatted sources—anywhere the raw HTML carries math-alpha characters in key terms.

If you want to be findable to AI, write in plain text. Save the unicode formatting for places where only humans are reading.

Link-in-comments posts are 31% less likely to be cited (but 11% more likely to be reacted to)

Posts that use the link-in-comments (LIC) tactic—that is, telling readers that the real content is down below—are 31% less likely to be cited by ChatGPT.

The problem is that your post becomes a pointer, not a source. ChatGPT prefers self-contained sources, and a post that explicitly says "real content elsewhere" signals the opposite.

But here’s the twist: The link-in-comments strategy does work, just for the source you're promoting instead of your post.

URLs posted in the comments get cited by ChatGPT 47% of the time (2x the baseline rate for a typical source). But the LinkedIn post itself only gets cited 13% of the time (about 45% less than a typical source).

In short: LIC posts direct AI traffic to different content.

But it gets more interesting: We tested whether the presence of an LIC post in the set of candidate sources ChatGPT retrieves for a query helped the linked source get cited.

It does—dramatically. For a given prompt, if both the LIC post and the source it's promoting appear in ChatGPT's retrieval set, the source's citation rate jumps from 24% to 59%.

The LIC post itself may or may not get cited. What matters is that it appears alongside the source during ChatGPT’s retrieval, which seems to reinforce ChatGPT's confidence in the source.

Through this lens, link-in-comments posts could be seen as a deliberate promotional strategy: Sacrifice your own post's citability (−31%) to massively boost the thing you're promoting (+144%).
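Note that the boost is a relative lift, not a percentage-point difference. Here is a quick sketch of the arithmetic using only the rounded rates quoted above (24% and 59%). Those rounded inputs give roughly +146%, so the published +144% presumably reflects unrounded rates that aren't shown here. The function name is illustrative.

```python
def relative_change(before: float, after: float) -> float:
    """Percent change going from `before` to `after`."""
    return (after - before) / before * 100


source_alone = 24.0      # linked source's citation rate (%), as quoted above
source_with_lic = 59.0   # same source when the LIC post is also retrieved (%)

print(round(relative_change(source_alone, source_with_lic)))  # 146
print(source_with_lic - source_alone)                         # 35.0 (percentage points)
```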

If you're using LinkedIn to drive AI traffic to your own website, this trade-off might be well worth it.

We should also note that these types of posts correlate with ~11% more reactions. Our guess is that this is indirect: The tactic drives comment activity, and LinkedIn's feed rewards posts with active comment threads by distributing them to more people, which yields more reactions.

Most of the LinkedIn engagement playbook won't hurt you

Several of the most effective LinkedIn engagement tactics have no measurable effect on AI citations—positive or negative.

They just do what they've always done: Help your content resonate with the humans reading it.

You’re free to use these tactics (some to the chagrin of LinkedIn users everywhere):

- Follow-for-more/DM me CTAs (+22% reactions): strongest engagement driver by far

- Originality (+13% reactions): distinctive perspectives stand out in the feed

- First-person authority (+12% reactions): writing from professional expertise

- Personal anecdote (+11% reactions): the classic LinkedIn storytelling play

- Step-by-step structure (+7% reactions): breaking things down sequentially

- Single-line paragraph rhythm (+6% reactions): replacing walls of text with line breaks

Know what you’re optimizing for and why

LinkedIn and AI search can serve similar business purposes, but they're two different worlds with sometimes different rules. The right strategy depends on what you're trying to accomplish.

Founder building a personal brand? You're playing for reach and followers, not AI citations. Same goes for recruiters, keynote speakers, or anyone else whose success is measured in LinkedIn metrics.

But if you're a marketer trying to shape what AI says about your brand, the calculus shifts. Technical depth, named entities, and topic specificity do the heavy lifting. The engagement tactics that juice reaction counts won't necessarily help you—and a couple of them will actively hurt you.

Most brands (especially B2B orgs) want better performance across both LinkedIn and AI search. Which means the question is less, "LinkedIn or AI?" and more, "What does this specific post need to do?"

The point is to know which game you're playing before you write the first word.

Since our customers are concerned with AI visibility, here are a few AI-search-centric tips to help you on your journey:

Find out what questions people are actually asking AI

Whether you’re trying to show up via LinkedIn content or some other method, you need to know what your audience is really asking, which topics have growing demand, and where there’s a content gap to fill.

Scrunch helps you do all of the above with our Trends feature.

Learn which AI search topics in your product category have the highest volume, which ones are growing, and which ones should be prioritized to maximize brand impact.

Let time (and the model) tell the full story

Measure a stable prompt set over time across the AI platforms that matter to your brand and update your strategy accordingly.

As the image above shows, when it comes to ChatGPT specifically, the rate of LinkedIn-based AI citations appears to have dipped in April 2026. Based on our preliminary research, it seems to be a change to the ChatGPT algorithm (perhaps they were testing something?) and not reflected in surfaces like Google AI Overviews or AI Mode.

It’s important to understand that AI search is not monolithic. Different platforms behave differently—and what worked yesterday may not work tomorrow.

Focus on the opportunities that really matter

Learn which citations are shaping answers to the prompts you care about, not just in general.

Aggregate data can be compelling but misleading. Whether or not LinkedIn is especially important to AI visibility for your brand depends on a lot of factors (check out our industry breakdown of the most-cited AI sources here).

Scrunch’s monitoring product helps you see exactly which sources are cited for business-relevant prompts—and whether those sources are coming from you, your competitors, or a third party.

You can learn which ones are most influential across every facet that matters to your business: topic, persona, funnel stage, AI platform, and more.

Don’t sleep on other citation strategies

LinkedIn content may be a going (and growing) concern for AI citations, but it’s far from the only game in town.

Owned content on your website, placement in third-party sources, syndicated content across news outlets—there are lots of ways to help your brand show up in the AI answers that matter to you.

Scrunch can help you identify, prioritize, and take action on these opportunities at scale, both through our own technology and our partnerships with solutions like Noble and Stacker.

Here’s your AI citation/LinkedIn engagement cheat sheet

You can download the reference checklist below.

To optimize for AI citations:

- [ ] Include technical details: Architecture, specs, implementation comparisons, etc. (+77% citations)

- [ ] Add named entities: Name specific companies, people, products, etc. (+33% citations)

- [ ] Write about niche topics: Specificity helps for prompt matching (+18% citations)

- [ ] Avoid the link-in-comments tactic—unless you want to drive AI to your linked source instead (-31% citations)

- [ ] Write in plain text, not unicode: 𝗯𝗼𝗹𝗱 and 𝘪𝘵𝘢𝘭𝘪𝘤 characters are invisible to ChatGPT's search (-58% citations)

To optimize for LinkedIn reactions:

- [ ] Include follow-for-more/DM me CTAs: Strongest engagement driver (+22% reactions)

- [ ] Be original: Readers reward distinct perspectives (+13% reactions)

- [ ] Apply first-person authority: Write from direct experience and expertise (+12% reactions)

- [ ] Use personal anecdotes: Everyone loves a good story (+11% reactions)

- [ ] Utilize step-by-step structure: Humans are suckers for systematic progression (+7% reactions)

- [ ] Employ single-line paragraph rhythm: Short, snappy sentences are easier to read (+6% reactions)

Maximize the citation/engagement sweet spot:

- Named entities help both citations (+33% citations) and reactions (+5% reactions)

- Topic specificity helps both citations (+18% citations) and reactions (+13% reactions)

Avoid useless tactics:

- AI may love a good FAQ, but not on LinkedIn: Posts structured around one specific question have zero citation payoff and hurt reactions (−9% reactions)

Brands today are writing for machines, not just humans.

The overlaps are real. So are the trade-offs. When it comes to LinkedIn, now you know which is which.

Be the answer in AI search with Scrunch

Track and take action on AI search citations. Start a 7-day free trial or get in touch to see how Scrunch can help you show up in the AI-first customer journey.

A quick note on our methodology:

We analyzed 12,000 LinkedIn post observations from ChatGPT between January 15 and April 15, 2026, including posts that ChatGPT considered relevant while answering real questions but chose not to cite. Every "not cited" data point therefore represents a post that was relevant to the prompt but that ChatGPT decided not to use.

We then analyzed ~4,000 LinkedIn posts for citations and reactions, controlling for author audience size and how long the post had been live. "Reactions" here means the full LinkedIn reaction set (Like, Celebrate, Support, Love, Insightful, Funny) counted on the post itself, not reactions on the comments underneath.

Every post was scored on 21 content dimensions using an AI annotation model. Causal estimates use double machine learning (Chernozhukov et al.), a method that estimates each content dimension’s effect while simultaneously controlling for every other measured dimension.
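For readers unfamiliar with the method, here is a heavily simplified sketch of the partialling-out idea behind double machine learning. It is not our pipeline: the data is simulated, there is a single control variable, and plain least-squares lines stand in for the machine learning models that would normally predict the treatment and the outcome. All names (`fit_line`, `dml_effect`) are hypothetical.

```python
import random


def fit_line(xs, ys):
    """Least-squares fit of y = a + b*x; returns (a, b)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def dml_effect(treatment, outcome, control, n_folds=2):
    """Cross-fitted partialling-out estimate of the treatment effect."""
    n = len(treatment)
    res_t, res_y = [0.0] * n, [0.0] * n
    for fold in range(n_folds):
        train = [i for i in range(n) if i % n_folds != fold]
        held_out = [i for i in range(n) if i % n_folds == fold]
        xc = [control[i] for i in train]
        # Nuisance models are fit on the other fold(s) only (cross-fitting).
        a_t, b_t = fit_line(xc, [treatment[i] for i in train])
        a_y, b_y = fit_line(xc, [outcome[i] for i in train])
        for i in held_out:
            res_t[i] = treatment[i] - (a_t + b_t * control[i])
            res_y[i] = outcome[i] - (a_y + b_y * control[i])
    # Regress outcome residuals on treatment residuals (no intercept).
    return sum(t * y for t, y in zip(res_t, res_y)) / sum(t * t for t in res_t)


# Simulated data only: the true effect of the treatment is 0.5,
# and the control variable influences both treatment and outcome.
random.seed(0)
n = 4000
control = [random.gauss(0, 1) for _ in range(n)]
treatment = [0.8 * c + random.gauss(0, 1) for c in control]
outcome = [0.5 * t + 1.0 * c + random.gauss(0, 1)
           for t, c in zip(treatment, control)]

naive = fit_line(treatment, outcome)[1]
adjusted = dml_effect(treatment, outcome, control)
print(f"naive slope:  {naive:.2f}")
print(f"DML estimate: {adjusted:.2f}")
```

In this toy setup the naive slope overstates the effect because the control variable drives both the treatment and the outcome, while the cross-fitted estimate should land near the true value of 0.5.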

Posts were annotated by gpt-5-mini with high reasoning effort across the 21 dimensions. Boolean features are yes/no; ratings are 1–5 scales.

Here are all 21 dimensions we tested, sorted by effect on ChatGPT citation: